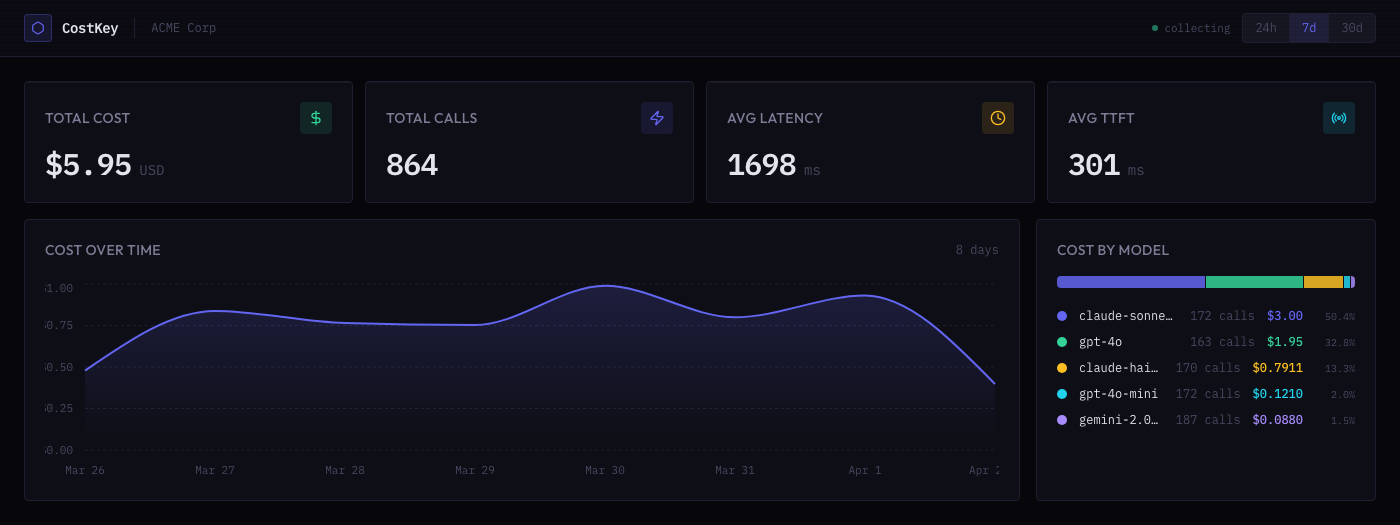

AI costs, down to the function.

Your OpenAI dashboard says you spent $2,000. With one CLI command, CostKey tells you that generateSummary() at src/features/search.ts:47 spent $1,200 of it.

Open-source SDKs on GitHub · npm + PyPI

Already using Portkey, Helicone, or LiteLLM? CostKey runs on top. Same setup command. No proxy changes.